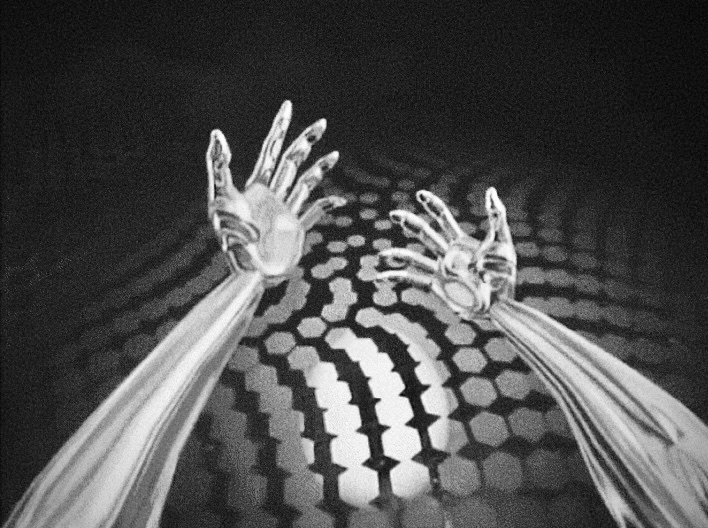

One of the goals we set from the beginning with T.E.A.M. was to make the VR experience as natural as possible, even for users with no previous experience of virtual reality headsets. For this reason, choosing the Oculus Quest headset was almost mandatory. Besides being the only VR headset on the market today capable of providing a complete VR experience (with 6 degrees of freedom) without being connected to an external PC, the Quest can also track the user’s hands in real time, thanks to four cameras that scan and process the surrounding physical space, so that the hands themselves act as controllers within the VR environment. “So great!”, we said to ourselves. “We can make digital experiences without worrying about cables and external sensors, and above all we can let users use their own hands!”

Category: Behind the Scenes

This article describes some methods for optimizing Grasshopper definitions so that the geometry responds to changes in the control parameters as quickly and as smoothly as possible.

This kind of optimization in Grasshopper (GH) may not be crucial when the purpose is to define a fixed shape derived from a given configuration of parameters. In that common scenario, a delay of a few milliseconds in the computation between one variation and the next during the form-finding process should not cause the designer much of a headache. What we intend to do here, though, is stream potentially ever-changing computed geometries into the VR scene in real time. Why are we doing this?